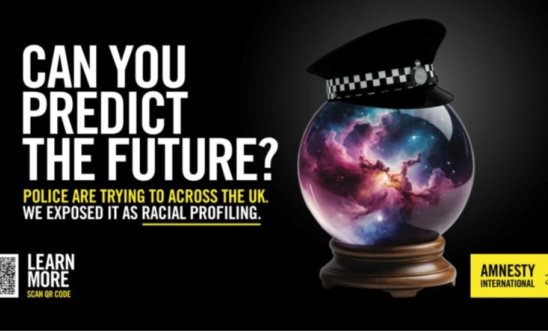

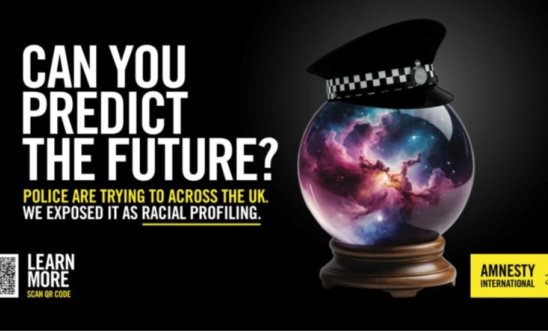

UK: TFL block Amnesty adverts to hide warnings over crime-predicting technology

Amnesty advert alerts residents of South London to the problematic predictive policing happening in their communities

Transport for London rejected the advert, stating ‘it might bring other members of the Greater London Authority (GLA) Group into disrepute’

Research revealed that the Met Police (a member of the GLA Group) attempt to predict the future by labelling people as ‘suspects’ without them ever having offended or committed a crime

People of ‘black ethnic appearance’ had the highest rate of Met stop and search encounters per 1,000 population of any ethnic group

‘Transport for London, and its Chair Mayor Sadiq Khan, are in danger of being complicit in a cover-up of harmful Met Police crime predicting technology’ - Sacha Deshmukh

Amnesty International UK has sharply criticised Transport for London (TFL) for preventing them from displaying adverts that would inform South London residents that ‘predictive policing’ is occurring on their streets.

Amnesty had booked advertising space in Elephant and Castle tube station for adverts highlighting the findings of its new research, which exposed the prevalence of racial profiling technology and its use by police forces across the UK.

The aim was to alert the public to Amnesty’s damning conclusions in its 120-page report ‘Automated Racism – How police data and algorithms code discrimination into policing’, which exposes the grave dangers to society from ‘predictive policing’ systems and technology used across almost three quarters of the UK’s police forces.

This is the first report to demonstrate how these systems are in flagrant breach of the UK’s national and international human rights obligations.

Amnesty found that at least 33 police forces across the UK, including the Metropolitan Police and British Transport Police, have used predictive profiling or risk prediction systems. Of these forces, 32 have used geographic crime prediction, profiling, or risk prediction tools, and 11 have used individual prediction, profiling, or risk prediction tools.

Transport for London rejected the adverts, stating ‘it might bring other members of the GLA Group into disrepute’, a group which would include the Mayor’s Office for Policing and Crime.

Sacha Deshmukh, Amnesty International UK’s Chief Executive, said:

“Transport for London and its Chair Mayor Sadiq Khan are in danger of being complicit in a cover-up by preventing police transparency and community awareness about harmful crime predicting technology.

“Protecting members of the GLA, such as the Mayor's office of crime and policing, just creates more of a shroud of secrecy. A shocking lack of transparency already exists about these crime-predicting technologies, and public policing should be open to critique and accountability.

“The use of predictive policing tools violates human rights. The evidence that this technology keeps us safe is just not there; the evidence that it violates our fundamental rights is clear as day. We are all much more than computer-generated risk scores.

“These technologies have consequences. The future they are creating is one where technology decides that our neighbours are criminals, purely based on the colour of their skin or their socio-economic background.

“These tools to ‘predict crime’ harm us all by treating entire communities as potential criminals, making society more racist and unfair.

“TFL have made the wrong call in preventing us from advertising, and we are calling on the Mayor of London to reverse it.”

Racist and failing systems in London

Risk Terrain Monitoring (RTM) is a predictive policing system that processes police-acquired data to generate a location-based risk score.

An initial period of RTM-influenced policing, beginning in September 2020, targeted the northern parts of the boroughs of Lambeth and Southwark. Between December 2020 and October 2021, Lambeth had the second-highest volume of stop and searches of all London boroughs. In the same period, people of ‘black ethnic appearance’ (as defined by the Metropolitan Police Service) had the highest rate of stop and search encounters per 1,000 population of any ethnic group: they were stopped and searched more than four times as often as people of white ethnic appearance. Eighty per cent of these stops and searches resulted in no further action. In the same period, Lambeth also had the second-highest volume of police uses of force of all London boroughs, and police used force most often against people recorded as ‘black or black British’.

In Southwark, in the year ending March 2021, Black people were stopped and searched 3.3 times as often as white people. Police used force against people in Southwark at least 8,924 times between September 2020 and September 2021, and in 45 per cent of those instances force was used against ‘black or black British’ people.

The Metropolitan Police Service’s Violence Harm Assessment profiles people based on intelligence reports, including information about people who are only ‘suspects’, which means an individual can be profiled without ever having offended or committed a crime.

The force has said that it will not inform any member of the public that they feature on the Violence Harm Assessment. It also says that data subject access requests from individuals asking if they are on the Violence Harm Assessment list will be considered ‘on a case-by-case basis against the statutory exemptions and the level of risk the individual presents and risks of notification to the individual’.

The Metropolitan Police Service has itself noted that issues with the Violence Harm Assessment include: the adultification of children; the ‘Rationale for Suspect over Convictions’, and how using ‘suspect’ status could risk racial disproportionality if someone is wrongly named; and the resulting ‘possibility of disproportionality due to some communities/areas being “over policed” leading to greater reports’.

Human rights violations exposed

Racial profiling: The use of these systems by police results, directly and indirectly, in racial profiling and the disproportionate targeting of Black and racialised people and people from lower socio-economic backgrounds. This, in turn, leads to their increased criminalisation, punishment, and exposure to violent policing.

Right to a fair trial: Predictive systems target individuals and groups before they have actually committed an offence, which risks infringing on the presumption of innocence and the right to a fair trial.

Mass surveillance: Mass surveillance is indiscriminate and can never be a proportionate interference with the rights to privacy, freedom of expression, freedom of association and of peaceful assembly.

Chilling effect: Individuals who live in areas targeted by predictive policing will likely seek to avoid those areas, resulting in a chilling effect. Participants in the Essex discussion group stated that if police were targeting specific areas, they would likely avoid those areas.

Recommendations

Amnesty is calling for:

- A prohibition on predictive policing systems.
- Transparency obligations on data-based and data-driven systems being used by authorities, including a publicly accessible register with details of systems used.
- Accountability obligations, including a right and a clear forum to challenge a predictive, profiling, or similar decision or consequences leading from such a decision.